Sparse Signal Processing

What are Sparse Signals?

A sparse signal is a signal which has a representation in some domain where the number of non-zero elements in that domain is significantly lower than the signal length . In other words, these signals can be represented digitally with a high degree of accuracy by relatively few non-zero coefficients. Many of the signals occurring in nature related to human life are sparse. In signal processing, this constraint can be exploited for a diverse range of applications using the concept of compressive sensing, which reconstructs the sparse signal from a reduced set of measurements. This field is of much interest among researchers and engineers due to the algorithms and applications involved and the continuing expansion of the boundaries of its capabilities.

What are the Algorithms?

To this end, we consider the following data acquisition model.

y=Ax+z,

Here, the observation vector of length k is given by y and x is the sparse signal of length m. Our objective is to estimate x from the noisy measurements y. The matrix A is a linear operator (also known as Dictionary), that maps the vector space ℜm to vector space ℜk with m k. The additive observation noise is given by z. Since x is sparse, that property can be utilized to estimate x as follows [Nat95],

In the above equation, the L0-norm of a vector is represented by ∥·∥0 and it is equivalent to the number of nonzero elements in the vector. Also, the the noise bound is given by ϵ. The above optimization problem is non-convex and NP-hard [Nat95]. The most common approach to tackle above NP-hard problem is replacing the L0-norm with L1-norm (absolute sum of elements) [CRT06a] and the new transformed problem is known as basis pursuit denoising (BPD) [CDS01] or least absolute shrinkage and selection operator (LASSO) [Tib96].

Further, under reasonable conditions, an NP-problem could be solved numerically. Some examples are the direct search method, matching pursuit approaches [MZ93], base pursuit approaches, and thresholding approaches like iterative soft thresholding algorithm (ISTA) [DDDM04] and fast ISTA [BT09].

It is worth considering how the demand for real-life applications means that very efficient algorithms are needed for time critical applications. This also includes developing new algorithms which are capable of obtaining signal reconstructions from even fewer data and which have a better tolerance to noise and signal non-sparsity.

What are the Applications?

The applications in sparse signal processing are numerous, including signal processing, image processing, computer vision, machine learning, and data compression. It is useful not only in compression but also in cases where obtaining a complete number of samples of a signal is either expensive, inconvenient, or even impossible. The incomplete signal can be used to reconstruct the original sparse signal. Applications of this nature include image and audio reconstruction and in Radar signals, and ECG signals.

Images can generally be assumed to be sparse in their two-dimensional discrete cosine transform representation. The pixels of an image may be corrupted due to noise. These corrupted pixels can be detected and removed. The image can be reconstructed later using the reduced set of measurements. This is possible by exploiting the sparsity property of common images. The reconstruction of a corrupted image by using the gradient algorithm is shown in the figures below.

(Original image)(Figure 1)

‘

(Corrupted image)(Figure 2)

(Reconstructed image)(Figure 3)

Image is adapted from “A Tutorial on Sparse Signal Reconstruction and its Applications in Signal Processing” by Ljubiša Stankovic, Ervin Sejdic, Srdjan Stankovic, Miloš Dakovic, and Irena Orovic

In Inverse Synthetic Aperture Radar(ISAR), a two-dimensional Fourier transform of the received signal is used to construct the image of a target body. The image is a highly concentrated two-dimensional function. It has peaked at the target range and cross-range positions of the body. The number of non-zero pixel values in the image is much lower than the total number of pixels present. Hence it can be considered sparse. Therefore, any unavailable or corrupted data can be compensated by reconstructing the signal from received error-free data. The usage of a reduced set of radar signals to reconstruct the original radar signal is shown in Figure (4).

Figure (4) – A single iteration based algorithm applied to the simulated radar signal applied to a simulated radio signal. Image is adapted from “A Tutorial on Sparse Signal Reconstruction and its Applications in Signal Processing” by Ljubiša Stankovic, Ervin Sejdic, Srdjan Stankovic, Miloš Dakovic, and Irena Orovic

Where to next?

Even though many such applications and algorithms have been developed, there are many unsolved questions existing in this field. For example, there is a need for computationally efficient uniqueness tests and optimal testing strategies. The context behind compressive sensing has also been changed in newer models as the constraints involved in sparse signal reconstruction are relaxed to allow inaccurate data measurements. Examples included modifications for dealing with wideband analog signals and reconstruction methods that adapt as new measurements are obtained.

Compressive sensing is especially exciting due to its interdisciplinary nature. There are many papers considering multiple aspects such as algorithm development, theoretical problems and possible applications. The discoveries and inventions made in the fields of applied mathematics, pure mathematics, and engineering act together to propel the frontiers of compressive sensing forward. The field continues to be of great interest as more applications and algorithms are yet to be discovered.

References

[Nat95] B. K. Natarajan, “Sparse approximate solutions to linear systems,” SIAM J. Comput., vol. 24, no. 2, pp. 227–234, 1995.

[CRT06a] E. J. Candes, J. K. Romberg, and T. Tao, “Stable signal recovery from incomplete and inaccurate measurements,” Commun. Pure Appl. Math.: A Journal Issued by the Courant Institute of Math. Sci., vol. 59, no. 8, pp. 1207–1223, 2006

[CDS01] S. S. Chen, D. L. Donoho, and M. A. Saunders, “Atomic decomposition by basis pursuit,” SIAM review, vol. 43, no. 1, pp. 129–159, 2001.

[Tib96] R. Tibshirani, “Regression shrinkage and selection via the LASSO,” J. R. Stat. Soc.: Series B (Methodological), vol. 58, no. 1, pp. 267– 288, 1996.

[MZ93] S. Mallat and Z. Zhang, “Matching pursuits with time-frequency dictionaries,” IEEE Trans. Signal Process., vol. 41, no. 12, pp. 3397– 3415, 1993

[DDDM04] I. Daubechies, M. Defrise, and C. De Mol, “An iterative thresholding algorithm for linear inverse problems with a sparsity constraint,” Commun. on Pure and Applied Math.: A Journal Issued by the Courant Institute of Math. Sci., vol. 57, no. 11, pp. 1413–1457, 2004.

[BT09] A. Beck and M. Teboulle, “A fast iterative shrinkage-thresholding algorithm for linear inverse problems,” SIAM journal on imaging Sci., vol. 2, no. 1, pp. 183–202, 2009.

Read MoreGraph Signal Processing

Introduction

Signals are everywhere. In the present world, signal processing is likely to be changing into information processing with the introduction of new research trends like Topological signal processing, Graph signal processing, Data-Driven approaches for imaging systems (including neural networks), Data-Driven beamforming techniques for 6G and Beyond communication systems, latest video compression standard VVC and some new ones to many models like Multimodal Speech recognition. Thanks to some ground-breaking inventions such as the Fast-Fourier Transform, linear filters, and Kalman filter, which are distinctive to open the pathway of such overwhelming trends. Here, we are going to do a brief discussion on Graph signal processing.

First of all, what is signal processing? Why is it considered to be one of the pioneering fields for research? The answers to these questions lead us to the following discussion. The representation, analysis, and manipulation of signals are dealt with within the field of engineering and applied mathematics, known as signal processing. Any quantity that carries information is a signal, including time-varying voltages, sound waves, and images. Signal processing’s objective is to extract information from signals and portray it in a more practical and understandable manner. Filtering, Fourier analysis, compression, and feature extraction methods are used to achieve this. Numerous industries, including telecommunication, voice and image processing, control systems, and biomedical engineering, use signal processing in various ways. Signal processing uses mathematical techniques and models to analyze and alter signals to extract useful information, enhance signal quality, or decrease the quantity of data required to describe the signal. As the requirements and capacities of different application areas evolve, new approaches are continually being created in the sector.

Graph Signal Processing

Data is everywhere in massive quantities in the present technological world. Almost every aspect of our day-to-day life is recorded at one or many different kinds of levels. Our personal health data are recorded and processed through health monitoring devices and apps, various types of financial and banking data, our web searches, our social networks, and our mobility by traffic patterns. Each of these data is being recorded by any means. The complexity of such networks and interactions means that the data now resides in more irregular and complex structures. So to process these kinds of complex data into precise, meaningful information, we need new tools.

In Simple words, Graph signal processing (GSP) can be briefly described as a branch of signal processing, concerned with the study and control of signals defined on graphs. The interactions between the signal samples in GSP are modeled as graphs, which may subsequently be examined using graph spectral theory, graph filters, and other graph-based processing methods. In order to handle signals with underlying structures such as those that emerge in social networks, brain connections, and other complex systems, GSP aims to expand the capabilities of conventional signal processing techniques.

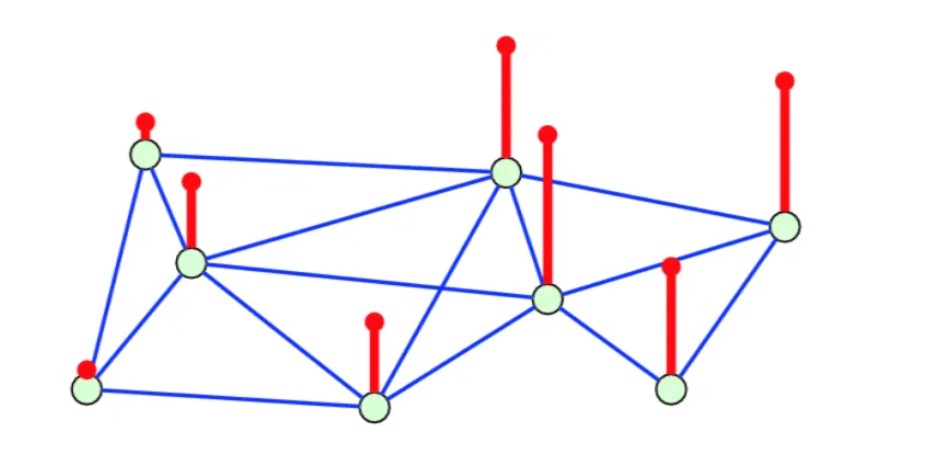

Graph Signal. Source: https://link.springer.com/chapter/10.1007/978-3-030-03574-7_1

For example, let us consider a state which has 8 cities. Suppose we need to represent the number of automobiles in each city. As shown in the figure, we can represent this information as a graph. Each light green vertex represents a city and its location in the state. The route between the two connected cities might be interpreted as the boundaries separating the cities. The number of vehicles, a scalar value, is represented by the red vertical line. Therefore, as the red line rises, so does the city’s automotive population.

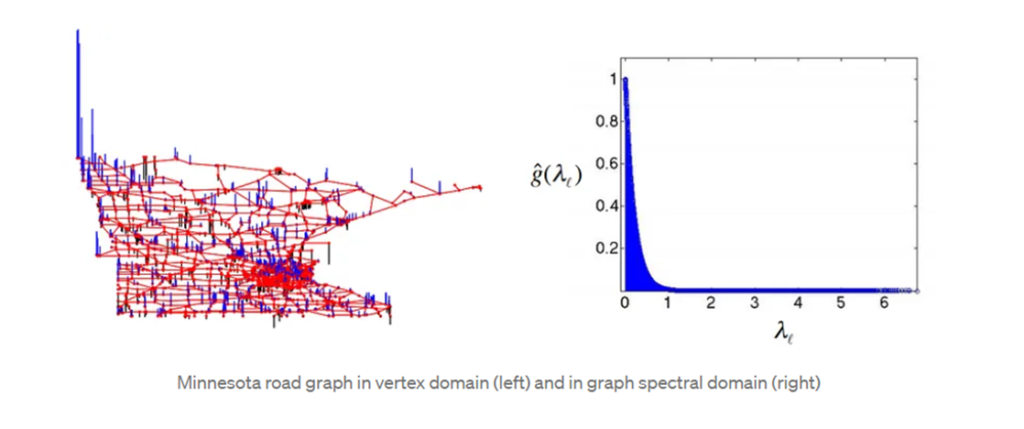

Graphs offer the ability to model such complex data and interactions between them. The data can be modeled into nodes and edges. Graph signal processing introduces exploration and analysis of such nodes and edges by adding attributes and modeling those as signals on a graph. For example, the temperature in a given city on a given day in a weather network. Representing such data into graphs requires us to extend classical signal processing concepts and tools such as Fourier Transform, Filtering, and frequency response to data residing on Graphs. It also leads us to tackle complex tasks such as sampling in a principled way.

Classical signal processing signals can stem from various domains. However, the underlying graphs can tell a fair amount about those signals through their structure. Different types of Graphs model different types of data and complex networks. Such graphs include Erdos-Renyi graphs, ring graphs, random geometric graphs, small-world graphs, and scale-free graphs. As in classical signal processing, graph signals can have properties such as smoothness that needs to be appropriately defined. They can also have spectral representation. In particular, the Graph Fourier Transform allows us to develop intuition gathered in the classical setting and extend it to graphs; we can talk about the notions of frequency band limitedness, for example, we can filter graph signals. They can be sampled, we can denoise graph signals, learn their underlying structure, and model them.

Additionally, there is a lot of promise for Graph Signal Processing to be used in sensor networks, smart grids, medical neural networks, the internet of cars, and other areas. Graph Signal Processing techniques are frequently implemented on the network using a single processing center in a centralized fashion. The centralized processing solution offers the benefits of being simple to implement and flexible when scheduling resources.

However, when the scale of the network grows, the centralized approach’s computational complexity quickly rises, necessitating more expensive gear. Additionally, the network as a whole may become paralyzed if the central node is attacked from the outside.

References

[1] A. Ortega, P. Frossard, J. Kovačević, P. Vandergheynst, and J. M. F. Moura, “Graph Signal Processing: Overview, challenges, and applications.” [Online], 2018. Available: https://ieeexplore.ieee.org/document/8347162.

Read MoreQuantum Computing: Where We Are And The Path Ahead

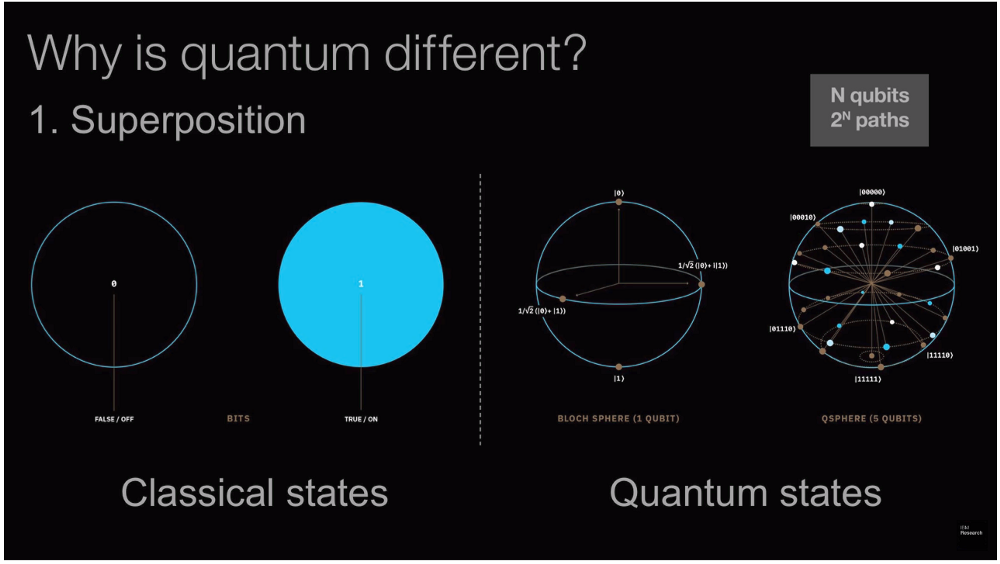

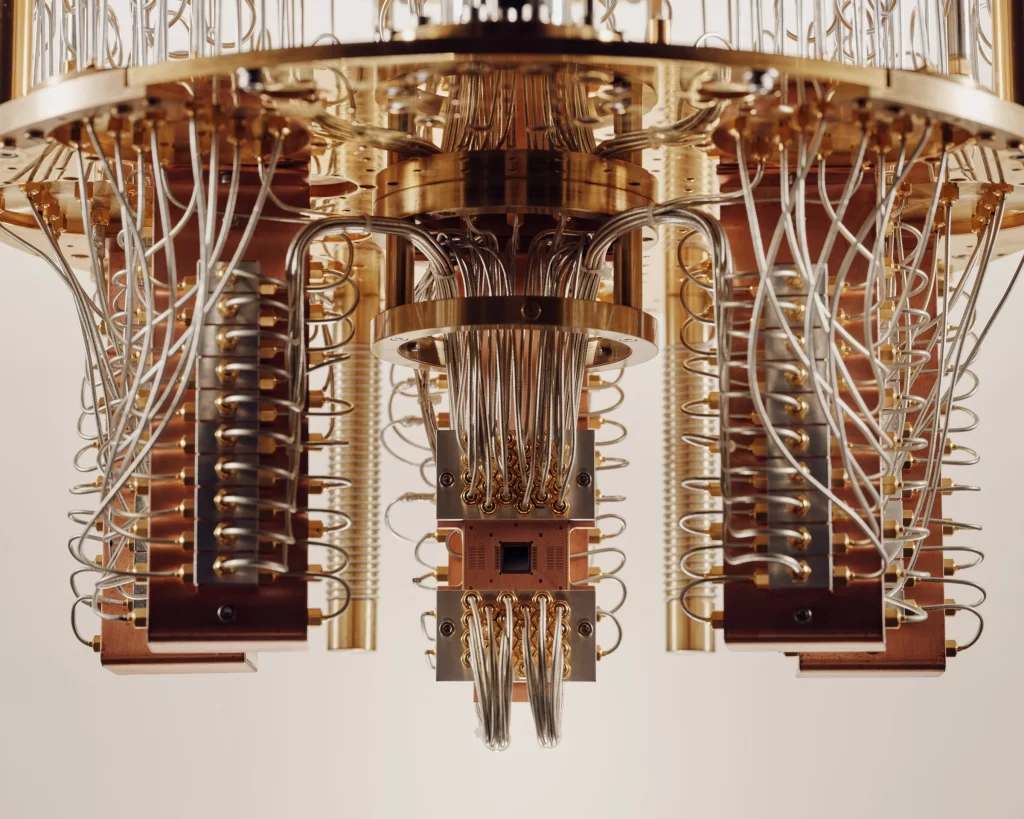

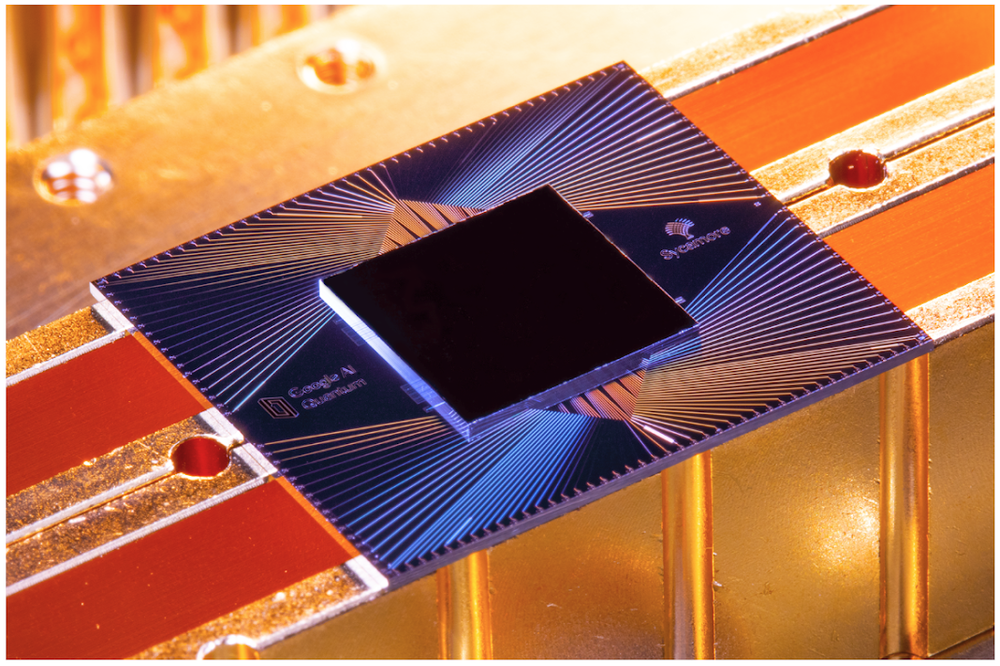

Quantum computing is a relatively new area that uses laws of quantum mechanics to build inner processing components of computers. The main difference between classical and quantum computers is that quantum computers use qubits rather than bits. A qubit could be 1,0 or both at once! (weighted combination of 1 and 0) while bit only could be either 1 or 0. In classical computing, bits are represented in various ways, such as high and low voltages, on and off states of transistors, charged and discharged states of capacitors, and many more. Similarly, qubits can be implemented in different ways. [1] Trapped ions (the first qubits were built using trapped ions in the mid-1990s) and superconducting circuits (used by IBM quantum) are the most used. Neutral atoms, defects in atom lattice (nitrogen impurities in a diamond lattice as an example), photons, and semiconductor molecules also can be used.

But why are quantum computers faster than classical computers? [2] The statement that “quantum computers are faster than classical computers” is only partially accurate. When dealing with complex problems, quantum computers are way faster than classical computers. But there can be instances where classical computers are more efficient. For example, consider where we have to figure out different ways protein molecules can fold. Classical computers try to find them using brute force algorithms. But even a protein molecule consisting of 100 amino acids could theoretically fold into trillion ways such that classical computers cannot practically solve this problem at a reasonable amount of speed. Quantum computers use different algorithms, which create multidimensional solution spaces where patterns linking individual data points emerge. In the case of the protein folding problem, the solution is the pattern that connects data points which takes the least energy. Since quantum computers use this multidimensional approach, they are way faster than classical computers.

Another leading aspect of quantum is encryption breaking. [3] In 1994, mathematician Peter Shor published an algorithm that has the potential to break most of the current cryptographic systems. Even though this algorithm poses a critical threat to many cryptographic systems, there is a drawback. It requires a quantum computer with millions of stable qubits. Current quantum computers only consist of a few hundred stable qubits. So current cryptographic systems are secure for now. Classical computers could not crack these public key encryption schemes like RSA because it requires a lot of time to do the calculations involving large prime numbers. Quantum computers use different algorithms to search number spaces parallelly to find these large prime numbers.

There are several companies involved in quantum computing. Xanadu, a Canadian company recognized for exploring photonic quantum computing, Toshiba’s Quantum Key Distribution program, which is working to secure network communications, and Righetti, which is experimenting with superconducting qubit technology, are some. Also, well-established tech companies such as Intel, Amazon, Google, and Microsoft have separate quantum computing research divisions. Since becoming the first to offer cloud-based quantum computing, IBM has done many developments in quantum computing. Their quantum computing roadmap shows many advancements.

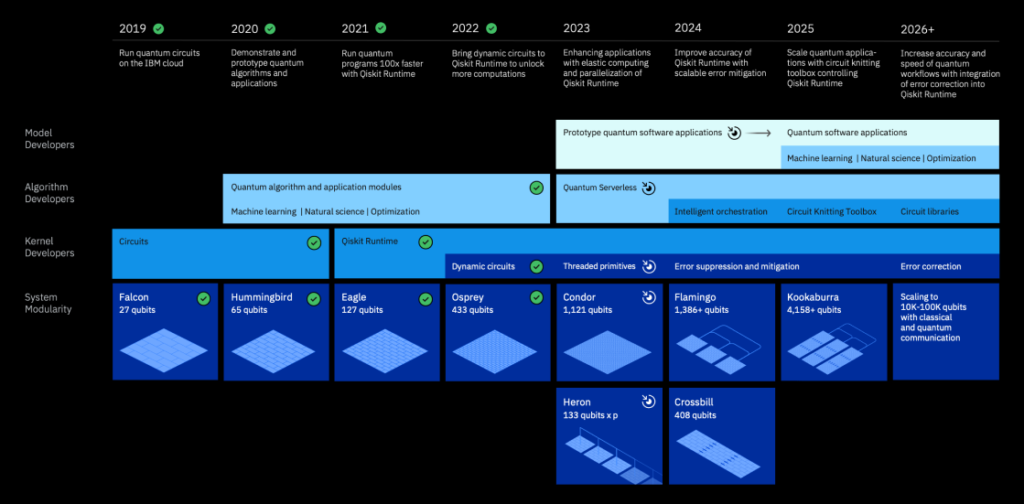

[4] In 2022, IBM updated its development roadmap to present an ambitious plan for scaling quantum systems beyond old limitations. Up to date, they have been able to hit planned milestones on time., and they are planning to do so in the future too. In 2022, they unveiled the 433-qubit Osprey processor, just one year after breaking the 100-qubit barrier with a 127-qubit Eagle chip. And this year, they are on track to deliver the 1,121-qubit Condor processor and control large systems. The key to solving the scalability problem is going beyond single-chip processors to multicore processors. Therefore, they are planning to introduce classical parallelized quantum computing with multiple Heron processors connected to a single control system. After succeeding in this initial step, they are planning to debut Crossbill, the first single processor made from multiple chips, in 2024. They will also unveil their Flamingo processor in the same year. This remarkable processor will be able to incorporate quantum communication links, allowing IBM to demonstrate a quantum system comprising three Flamingo processors totaling 1,386 qubits. They will be combining multi-chip processors and quantum communication technologies to create their Kookaburra processor by 2025. This leap forward will usher in a new era of scaling, providing a clear path to 100,000 qubits and beyond.

References

[1] C. Crockett, “More than one way to make a qubit,” symmetry magazine. https://www.symmetrymagazine.org/article/more-than-one-way-to-make-a-qubit

[2] IBM, “What is Quantum Computing? | IBM,” www.ibm.com, 2022. https://www.ibm.com/topics/quantum-computing

[3] R. Morrison, “Have Chinese scientists really cracked RSA encryption with a quantum computer?,” Tech Monitor, Jan. 06, 2023. https://techmonitor.ai/hardware/quantum-encryption-rsa-cryptography

[4] “IBM Quantum Computing | Roadmap,” www.ibm.com, Oct. 01, 2015. https://www.ibm.com/quantum/roadmap

Read More